|

Take a look at my instructions above; they're for Windows. In a nutshell, I opened the batch file C:\Program Files\CodeProject\AI\setup.bat and changed one line in it from false to true. After saving the file, go to the folder that contains the module you need to get working; in my case it was Object Detection YOLOv5 6.2. So, from a command prompt run as administrator, I went to the folder C:\Program Files\CodeProject\AI\modules\ObjectDetectionYOLOv5-6.2 and called the setup.bat file from there. At the command prompt it looks something like this (the part before the > is the prompt showing the current folder, and the quotes are needed because the path contains spaces):

C:\Program Files\CodeProject\AI\modules\ObjectDetectionYOLOv5-6.2> "C:\Program Files\CodeProject\AI\setup.bat"

All on one line, of course. Try it and see what happens.

|

|

|

|

|

Try uninstalling and reinstalling the module.

cheers

Chris Maunder

|

|

|

|

|

My dream is to create a custom AI model to identify make/model/color of vehicles.

I have zero AI experience.

I installed CodeProject and tested the built-in modules; they work well.

Thought I would follow 'How to Train a Custom YOLOv5 Model to Detect Objects' which is a tutorial on this site.

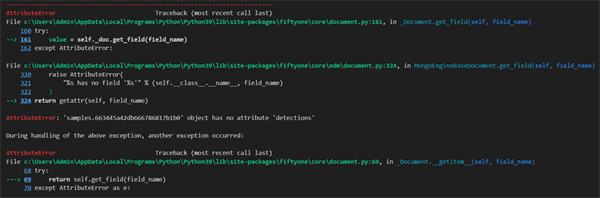

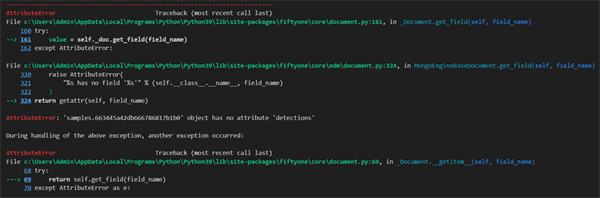

No luck, I can't even get to the training part. It crashes complaining a sample has no detections, but having no experience with this I can't figure out what the issue is.

Is this tutorial outdated? Should it still work? Can someone suggest a tutorial that actually functions?

|

|

|

|

|

This is a demo I've been wanting to write for months and months. The short version is that you should use a car make/model AI model as a custom model (e.g. this YOLO model) to get the make/model. I would then also run image segmentation (available in our current YOLOv8 module) to get the polygon outline of the car, then run a quick image analysis to get a histogram of the colours in the cutout of the car. Choose the most prominent colour and you're done.
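The colour step can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions, not code from any CodeProject.AI module: `dominant_colour`, its `pixels` and `mask` arguments, and the bucket size of 32 are all hypothetical. It assumes you already have the image as rows of (r, g, b) tuples and a same-sized boolean mask that is True inside the car's outline.

```python
from collections import Counter


def dominant_colour(pixels, mask, bucket=32):
    """Return the centre of the most common coarse RGB bucket inside the mask.

    pixels: rows of (r, g, b) tuples with values 0-255 (hypothetical input format)
    mask:   rows of booleans, True where the pixel belongs to the car
    bucket: width of each histogram bin per channel (an arbitrary choice)
    """
    counts = Counter()
    for pixel_row, mask_row in zip(pixels, mask):
        for (r, g, b), inside in zip(pixel_row, mask_row):
            if inside:
                # Quantise each channel so near-identical shades share a bin.
                counts[(r // bucket, g // bucket, b // bucket)] += 1
    if not counts:
        return None  # nothing inside the mask
    (rb, gb, bb), _ = counts.most_common(1)[0]
    half = bucket // 2
    return (rb * bucket + half, gb * bucket + half, bb * bucket + half)
```

Binning coarsely before counting is a design choice: with exact RGB values almost every pixel is unique, so the histogram would have no clear peak.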

cheers

Chris Maunder

|

|

|

|

|

LOL, it sounds so easy.

So far I have managed to create a small test model, using 333 images. I created the model with YOLOv5 on my computer.

I've been trying to figure out how to get it into CodeProject, but no joy so far.

I found a custom models path at C:\Program Files\CodeProject\AI\modules\ObjectDetectionYOLOv5-6.2\custom-models

I dropped the vehicle-makes.pt file there, and when I access the explorer built into CodeProject, there is a section called Object Detection (YOLOv5 6.2) where you can choose any of the models in that custom-models directory.

However, when I select my model and feed it an image it generates an error:

04:00:10:Object Detection (YOLOv5 6.2): [ModuleNotFoundError] : Unable to load model at C:\Program Files\CodeProject\AI\modules\ObjectDetectionYOLOv5-6.2\custom-models\vehicle-makes.pt (No module named 'dill')

04:00:10:Object Detection (YOLOv5 6.2): Unable to create YOLO detector for model vehicle-makes

No idea what no module named 'dill' means...

|

|

|

|

|

dill is used to store and load Python objects (including an entire interpreter session).
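The message itself is a plain Python ModuleNotFoundError: loading the .pt file tried to import the dill package, and that package is not importable in the Python environment the module runs in. A minimal sketch for checking whether a package is importable is below. It has to be run with the same Python interpreter the module uses (which one that is on your install is something you would need to confirm), and `is_installed` is just a hypothetical helper name.

```python
import importlib.util


def is_installed(module_name):
    """Return True if a top-level module can be found by this interpreter."""
    return importlib.util.find_spec(module_name) is not None


print("dill installed:", is_installed("dill"))
```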

How did you create your model?

cheers

Chris Maunder

|

|

|

|

|

Can someone help troubleshoot this error? The short of it is that when I try to use CodeProject with my BI system's GPU (an Intel iGPU), this error comes up in the logs:

ObjectDetectionYOLOv5Net.exe: 2024-05-02 18:31:29.4590658 [E:onnxruntime:, inference_session.cc:1799 onnxruntime::InferenceSession::Initialize::::operator ()] Exception during initialization: D:\a\_work\1\s\onnxruntime\core\providers\dml\DmlExecutionProvider\src\DmlGraphFusionHelper.cpp(432)\onnxruntime.DLL!00007FFE2712F11B: (caller: 00007FFE270B44E6) Exception(3) tid(2154) 80004005 Unspecified error

If I downgrade back to older versions (I believe it was older than CodeProject AI 2.3.4) I am able to use the GPU. Blue Iris support basically told me to come here for assistance. Any help with this is appreciated!

|

|

|

|

|

We absolutely need your system info (see pinned message) so we can start to suggest solutions.

cheers

Chris Maunder

|

|

|

|

|

Hi Chris,

Here is what you asked for. One thing I didn't think about before: according to this, CP thinks my primary GPU is Microsoft RDP. I use RDP to manage my BI machine, but I'm not sure whether this could be an issue:

Server version: 2.6.2

System: Windows

Operating System: Windows (Microsoft Windows 10.0.19045)

CPUs: Intel(R) Core(TM) i3-4130 CPU @ 3.40GHz (Intel)

1 CPU x 2 cores. 4 logical processors (x64)

GPU (Primary): Microsoft Remote Display Adapter (Microsoft)

Driver: 10.0.19041.4355

System RAM: 8 GiB

Platform: Windows

BuildConfig: Release

Execution Env: Native

Runtime Env: Production

Runtimes installed:

.NET runtime: 7.0.18

.NET SDK: 7.0.408

Default Python: Not found

Go: Not found

NodeJS: Not found

Rust: Not found

Video adapter info:

Microsoft Remote Display Adapter:

Driver Version 10.0.19041.4355

Video Processor

Intel(R) HD Graphics 4400:

Driver Version 20.19.15.5063

Video Processor Intel(R) HD Graphics Family

System GPU info:

GPU 3D Usage 0%

GPU RAM Usage 52 KiB

Global Environment variables:

CPAI_APPROOTPATH = <root>

CPAI_PORT = 32168

|

|

|

|

|

Hi,

I recently set up CodeProject to work with Blue Iris. Blue Iris is running on Windows 11 in a Proxmox VM.

I have successfully passed a Coral USB through to the VM and CPAI. Everything seems to work fine for about 10 minutes, then CPAI seems to revert to CPU. The Coral is still present in Device Manager. I've turned off all USB power management in Windows, to no avail.

Any suggestions would be greatly appreciated.

|

|

|

|

|

Is the Coral device getting hot? Does the system have enough memory? Are you seeing USB driver errors?

Coral on Windows is not the most stable of products, I'm afraid. I always recommend other modules if running on Windows.

cheers

Chris Maunder

|

|

|

|

|

Forgive me if I missed this being posted somewhere already.

I want to set up a central AI server in our data center. I want our developers to be able to direct their projects to that central server for testing. When I try connecting to the machine's IP address on port 32168, I get no connection.

http://<machine-ip>:32168/

Seems simple but I'm missing something.

A similar question was posted with no answer. "how to connect a Blue Iris machine to another machine running CodeProject AI?"

This is not a Blue Iris question, just a reference above.

modified 2-May-24 15:35pm.

|

|

|

|

|

Well, assuming successful installation, that should work.

In my case, 192.168.50.17:32168 connects to a Linux (Debian) system running AI server in a Docker container.

If you are doing an install to Windows, did you use the script?

Did you get any errors during install? Any errors in system logs?

>64

It’s weird being the same age as old people. Live every day like it is your last; one day, it will be.

|

|

|

|

|

Have you opened port 32168 for HTTP (in and out) on your server's firewall (and possibly also on your developers' firewalls)?
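A quick way to tell a blocked port from a server problem is to try a raw TCP connection from a developer machine. The sketch below is only an assumption-laden illustration: `port_is_open` is a hypothetical helper, and the 3 second timeout is arbitrary.

```python
import socket


def port_is_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False
```

Calling `port_is_open("<machine-ip>", 32168)` with your server's address should return True once every firewall layer between the two machines allows the port. If it returns False from a remote machine but True on the server itself, something in between is blocking it.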

cheers

Chris Maunder

|

|

|

|

|

Thank you, I did not take the time to trace this out.

I found a second layer that was blocking the port.

Juggling too many things at once.

Thank you all for the support.

|

|

|

|

|

Is it better to run CodeProject on the same Windows PC Blue Iris is running, or run it on a virtual machine running Docker?

|

|

|

|

|

It probably depends on which machine has the better GPU, if you are using one. We run CPAI on a VM (Debian with Docker) because the system hosting the virtual machine has a better video card (and Linux is a little leaner). You do need a virtual host that allows PCI pass-through to use the video card; we run on ESXi. In the earlier days, this setup also seemed to make CPAI updates easier. If our BI system had a better video card, I would run "native".

Just my $0.02.

>64


|

|

|

|

|

The PC I am using has an older GPU, a GTX 960, and I was told I cannot use CUDA since my GPU does not support it. So I've only been using YOLOv5 .NET.

I have an 8-core AMD Ryzen 5700G with 16 GB of 3600 MHz RAM. Would I benefit from running CP on a virtual machine?

|

|

|

|

|

Again, if the VM is on another PC that has faster performance, it could be of benefit. Keep in mind there is overhead in the networking.

It is pretty easy to set up the VM and do a test. It is only a small configuration change in the BI system once you work out the Docker set up. Then look at the ms alert times.
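If you want numbers that are comparable between the two setups, time the same request several times against each and compare the medians. A minimal sketch follows; `median_ms` and its `call` argument are hypothetical, and `call` stands for whatever function sends one detection request to the server you are testing.

```python
import statistics
import time


def median_ms(call, runs=10):
    """Run call() several times and return the median wall-clock time in ms."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(samples)
```

The median is used rather than the mean so that a single slow first request (model loading, for example) does not skew the result.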

>64


|

|

|

|

|

What would be an acceptable speed for alerts?

Just want to know what is considered normal or too slow.

thanks.

|

|

|

|

|

I consider mine mediocre at best, 60-80 msec. But I run an old low memory video card P620, only 2GB.

That seems to do the job, mostly we filter for false alerts due to shadows.

We plan to upgrade it although we only use AI on 5 of 14 cameras.

>64


|

|

|

|

|

Maybe I'm looking at the Status logs wrong, but mine only shows ms when it doesn't find anything.

When it does detect and trigger, there is no ms at all. Is this normal?

For example, as you can see, it detected a person at 88% but shows no ms. Then, below, it found nothing and the alert was cancelled, yet it shows 238 ms.

|

|

|

|

|

On the alert page, select "save AI analysis details."

Open the log file. Make sure you select "save to file".

>64


|

|

|

|

|

It shows person 92% at 236ms. That seems very slow then. I am using Nvidia as HA since I have the GTX 960 card, but it appears it's not doing anything.

Something has to be wrong with my settings. Isn't using the graphics card for HA supposed to make alerts faster?

|

|

|

|

|

This is a Blue Iris issue; send an email to Blue Iris Support describing it.

|

|

|

|
